Hello there! I am .BB, a robot demonstrator. I move purposefully across beaches to detect and clean up small litter. While driving I will 'see' litter, because a trained algorithm lets me recognize it.

Let me tell you who I am!

I use a Convolutional Neural Network (CNN), a self-learning algorithm that can find patterns in images. To feed my algorithm, it is very important to give me a large amount of training data. The training data must be diverse, so that I can spot litter in all lighting conditions and in all shapes and sizes.

Over time I will get better and better at estimating what kind of litter I have found. The algorithm is probabilistic: it reports how likely each guess is. For example, if I am 80% sure that a can is lying in front of me, I pick it up.

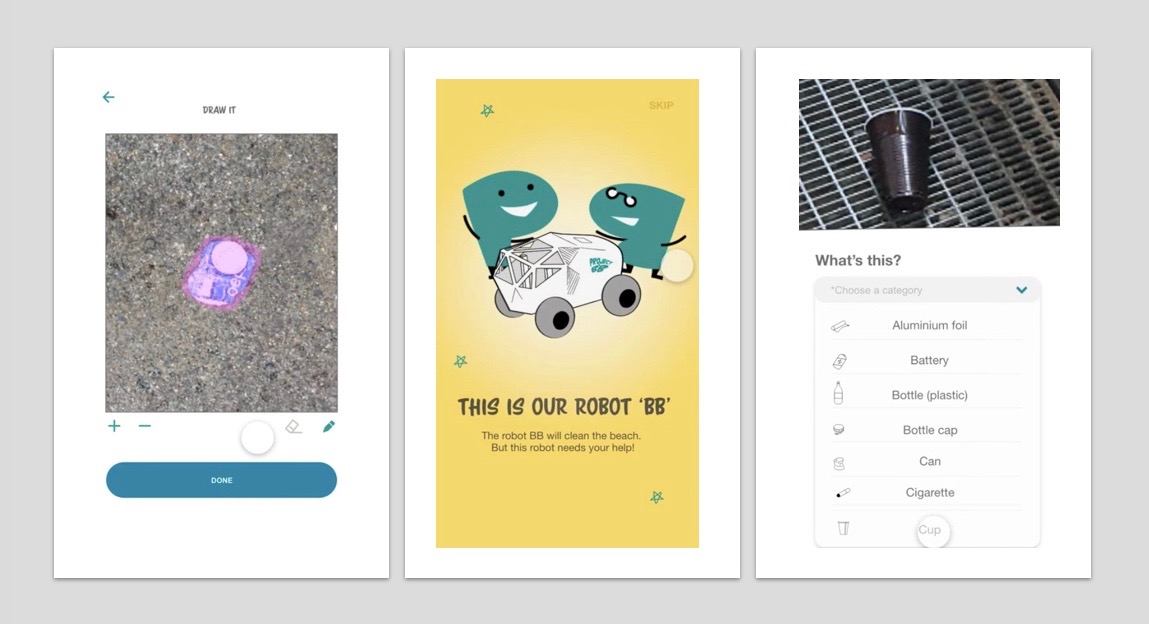

If I am not sure what kind of item is in front of me, I take a photo with a GPS tag. I will ask the public to advise me online through a mobile app and a mixed reality game. I will ask you "what is this?" and then ask you to annotate and label the photo. That information is sent back to me, so you make me smarter.
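
My decision rule could be sketched roughly like this in Python. The 80% threshold comes from the can example; the names `Detection`, `decide`, and `CONFIDENCE_THRESHOLD`, the sample coordinates, and the in-memory review queue are illustrative assumptions, not my actual software.

```python
from dataclasses import dataclass

# Assumption: threshold taken from the 80% example in the text.
CONFIDENCE_THRESHOLD = 0.80


@dataclass
class Detection:
    label: str         # most likely litter class according to the CNN
    confidence: float  # probability assigned to that class, 0.0 to 1.0
    latitude: float    # GPS position where the photo was taken
    longitude: float


def decide(detection: Detection, review_queue: list) -> str:
    """Pick up confident detections; queue uncertain ones for public labeling."""
    if detection.confidence >= CONFIDENCE_THRESHOLD:
        return "pick_up"
    # Not sure enough: keep the GPS-tagged record so people can annotate it.
    review_queue.append(detection)
    return "ask_public"


queue = []
# Hypothetical detections with made-up coordinates.
print(decide(Detection("can", 0.80, 52.11, 4.28), queue))      # pick_up
print(decide(Detection("unknown", 0.35, 52.11, 4.28), queue))  # ask_public
print(len(queue))                                              # 1
```

The labels that come back from the public would then be added to the training data, which is how the uncertain cases end up improving the next version of the model.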

Besides cleaning up litter, my most important added value is collecting data. By taking on a role in public space and actively seeking interaction with people, I also want to contribute to the public debate and awareness about the effects of litter.

For more information, check the FAQ or get in touch with us!